This is the second article in a series on Cassandra data modeling best practices for efficient Spark SQL joins. You can find the previous posts here and here.

Dimensional data modeling principles still apply when joining Cassandra tables with Spark SQL. However, we have to apply some additional techniques because of how Cassandra works.

Since there is an abundance of information on typical dimensional modeling approaches, I will focus on the Cassandra and Spark SQL aspects of the modeling process. If you are new to dimensional modeling, you can visit the Kimball site or do a quick search on the topic.

Denormalization

Principle #1: Minimize the number of tables in the Star Schema

Avoid snowflaking through denormalization. Keep the number of tables to a minimum. As you have seen in the Spark SQL article referenced above, each additional table in a join adds more shuffling and increases the overall query execution time.
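As a rough illustration of flattening a snowflake, the sketch below folds a category lookup into a product dimension before loading, so a query joins one dimension table instead of two. The table and column names (`products`, `categories`, `category_id`, and so on) are invented for this example and do not come from a real schema.

```python
# Hypothetical snowflaked dimension: product -> category (illustrative names only).
products = [
    {"product_id": 1, "product_name": "Laptop", "category_id": 10},
    {"product_id": 2, "product_name": "Desk", "category_id": 20},
]
categories = {
    10: {"category_name": "Electronics"},
    20: {"category_name": "Furniture"},
}


def denormalize(products, categories):
    """Fold category attributes into each product row, removing the extra join."""
    flat = []
    for row in products:
        merged = dict(row)  # copy so the input rows are left untouched
        merged.update(categories[row["category_id"]])
        flat.append(merged)
    return flat


# Each row of the flattened dimension now carries its category attributes.
product_dim = denormalize(products, categories)
```

The flattened rows would then be written to a single Cassandra dimension table, at the cost of repeating the category attributes on every product row.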

Principle #2: Avoid outer join relationships in the Star Schema

Use denormalization to remove outer join relationships. Spark SQL doesn’t push filters down to the outer-joined table (at least in version 1.4), so the outer-joined table always results in a full table scan.

Cassandra-Specific Data Modeling Aspects – Partition Keys and Clustering Keys

Principle #3: Pick the right partition keys to minimize the number of partitions in Cassandra tables, and apply filters in Cassandra as much as possible

Selecting the right partition keys is the most important aspect of the data modeling process. In general, good Cassandra partition key columns meet the following criteria.

Low, but not too low, cardinality: These keys let you store more rows in a single partition. Most often, these columns appear in the GROUP BY clauses of your queries.

Used in report filters: These keys are the most common mandatory filters in your queries. Spark SQL queries are much more efficient when partition key predicates are pushed down to Cassandra. This minimizes the amount of data flowing between Cassandra and Spark and therefore makes more efficient use of compute resources.

Non-updatable: These columns are never updated.

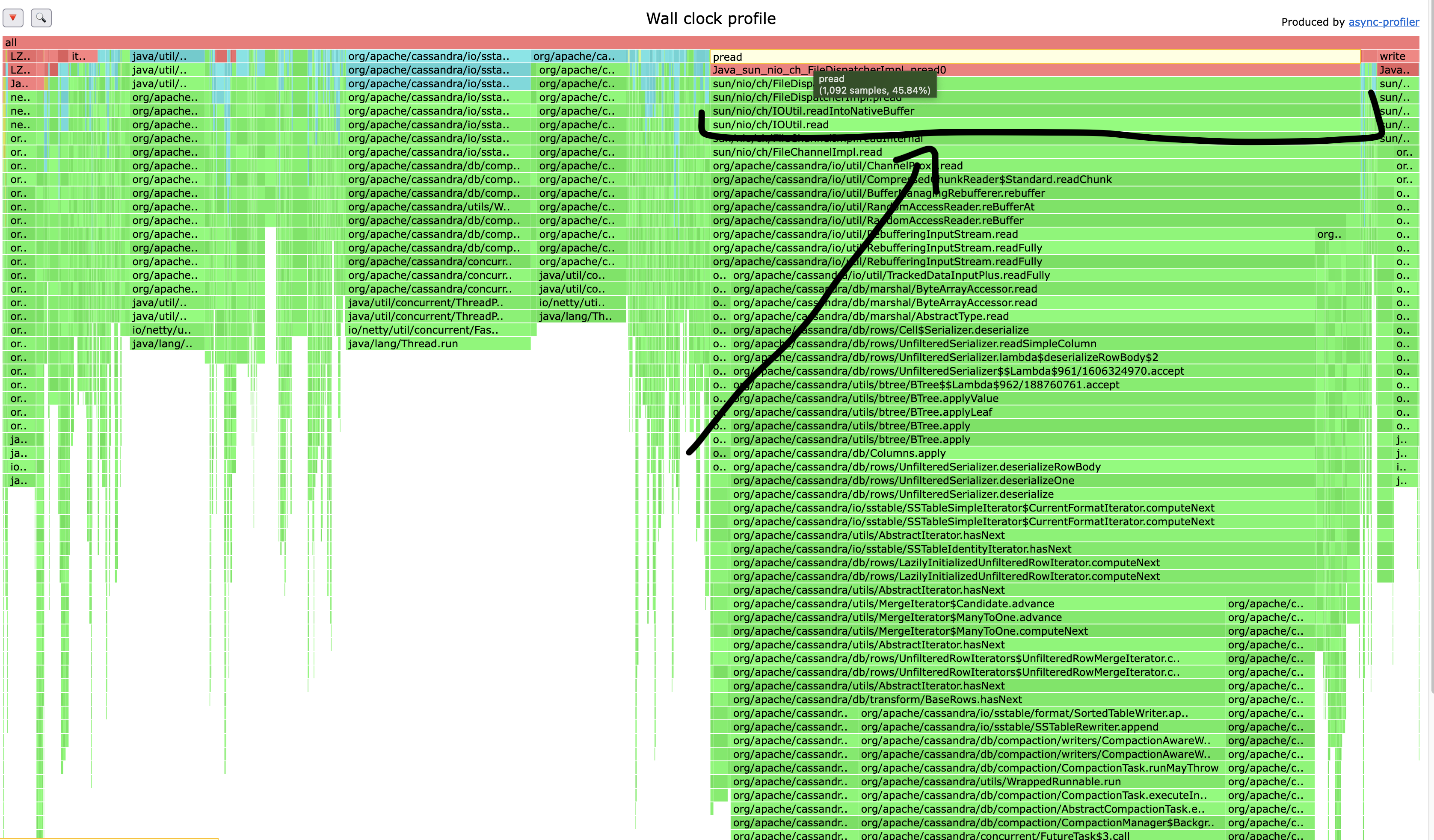
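One way to compare candidate partition keys against the cardinality criterion is to profile a sample of the data. The following is a minimal sketch, assuming the sample fits in memory as a list of dictionaries. The `profile_partition_key` helper and the sample columns are hypothetical names introduced here for illustration.

```python
from collections import Counter


def profile_partition_key(rows, key_columns):
    """Count partitions and the largest partition for a candidate partition key."""
    counts = Counter(tuple(row[c] for c in key_columns) for row in rows)
    return {
        "partitions": len(counts),
        "max_rows_per_partition": max(counts.values(), default=0),
    }


# Hypothetical sample of a transaction table.
sample = [
    {"region": "EAST", "store_id": 1, "txn_id": 101},
    {"region": "EAST", "store_id": 1, "txn_id": 102},
    {"region": "EAST", "store_id": 2, "txn_id": 103},
    {"region": "WEST", "store_id": 3, "txn_id": 104},
    {"region": "WEST", "store_id": 3, "txn_id": 105},
    {"region": "WEST", "store_id": 4, "txn_id": 106},
]

by_region = profile_partition_key(sample, ["region"])            # few, large partitions
by_store = profile_partition_key(sample, ["region", "store_id"])  # middle ground
by_txn = profile_partition_key(sample, ["txn_id"])                # one row per partition
```

A key like `txn_id` has cardinality that is too high (every partition holds one row), while `region` alone may be too low once the real data volume is considered.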

Principle #4: Limit your max partition size to 1 GB

Cassandra read performance will suffer once your partition sizes start to grow beyond 1 GB.
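A back-of-the-envelope check against this limit can be sketched as rows times average row size. This is only an approximation that I am assuming for illustration: it ignores compression and per-cell storage overhead, and the function names are hypothetical.

```python
ONE_GB = 1024 ** 3  # the 1 GB limit from this principle, in bytes


def estimate_partition_bytes(rows_in_partition, avg_row_bytes):
    """Rough partition size: row count times average row size (no overhead modeled)."""
    return rows_in_partition * avg_row_bytes


def exceeds_limit(rows_in_partition, avg_row_bytes, limit_bytes=ONE_GB):
    """True when the estimated partition size is above the limit."""
    return estimate_partition_bytes(rows_in_partition, avg_row_bytes) > limit_bytes


# Example: 5 million rows of about 200 bytes each is roughly 1.0e9 bytes, just under 1 GB.
fits = not exceeds_limit(5_000_000, 200)
# Example: 6 million rows of the same size is roughly 1.2e9 bytes, over the limit.
too_big = exceeds_limit(6_000_000, 200)
```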

Principle #5: Make sure partition growth is contained. Add computed columns to shard partitions if necessary

Depending on your business process and data model, you need to take preventive measures to ensure that the data in a partition cannot grow beyond the limit. This may require adding extra columns to your partition key to contain growth.

Ex: Adding a month column to the partition key of a transaction table ensures that new partitions are created every month.
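The month example can be sketched as a computed bucket column derived from the transaction date. The table definition below is a hypothetical CQL statement held in a string, and the names `transactions`, `account_id`, and `txn_month` are assumptions made for this illustration.

```python
from datetime import date

# Hypothetical table: (account_id, txn_month) is the composite partition key,
# so each account gets a new partition every month.
CREATE_TABLE_CQL = """
CREATE TABLE transactions (
    account_id text,
    txn_month  int,
    txn_time   timestamp,
    txn_id     uuid,
    amount     decimal,
    PRIMARY KEY ((account_id, txn_month), txn_time, txn_id)
)
"""


def month_bucket(txn_date):
    """Computed column: year and month as an integer such as 201507."""
    return txn_date.year * 100 + txn_date.month


def partition_key(account_id, txn_date):
    """Partition key values for a row written on txn_date."""
    return (account_id, month_bucket(txn_date))


july = partition_key("acct-1", date(2015, 7, 31))
august = partition_key("acct-1", date(2015, 8, 1))  # a new month starts a new partition
```

Because the bucket is computed at write time, queries need to supply the same `txn_month` value in their filters to hit a single partition.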

Principle #6: Optimize number of partitions in your table

Continue to tweak your partition key list until your max partition size comes close to the 1 GB limit. This ensures that your table has an optimal number of partitions. Fewer partitions lead to fewer Spark tasks during SQL execution.

It is usually not feasible for every partition in the table to be 1 GB in size; in 99% of data modeling situations, that will not happen. Your model will be fine as long as the largest partition in the table is around the 1 GB limit.

In some situations, it may not be feasible to reach a 1 GB max partition size at all. This is OK. The main point is to fit as many rows as possible into each partition.
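The tuning loop described above can be sketched as choosing, among candidate partition keys, the one with the fewest partitions whose largest partition still fits under the limit. This is a simplified model with made-up candidate statistics and a row-count-times-row-size estimate. The `pick_partition_key` helper is hypothetical.

```python
ONE_GB = 1024 ** 3  # 1 GB limit, in bytes


def pick_partition_key(candidates, avg_row_bytes, limit_bytes=ONE_GB):
    """Return the candidate key with the fewest partitions that stays under the limit.

    candidates maps a tuple of key columns to (partition_count, max_rows_per_partition).
    Returns None when no candidate fits.
    """
    fitting = [
        (partition_count, key)
        for key, (partition_count, max_rows) in candidates.items()
        if max_rows * avg_row_bytes <= limit_bytes
    ]
    if not fitting:
        return None
    return min(fitting)[1]


# Made-up statistics for a transaction table with rows of about 200 bytes.
candidates = {
    ("account_id",): (1_000, 50_000_000),               # about 10 GB max: too large
    ("account_id", "txn_year"): (5_000, 10_000_000),    # about 2 GB max: too large
    ("account_id", "txn_month"): (60_000, 900_000),     # about 180 MB max: fits
    ("account_id", "txn_day"): (1_800_000, 30_000),     # fits, but far more partitions
}

best = pick_partition_key(candidates, avg_row_bytes=200)
```

In this made-up case the monthly key wins: the daily key also fits, but it produces many more partitions and therefore more Spark tasks.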

Clustering Keys

Principle #7: Choose additional primary key columns that uniquely identify rows within a partition

Principle #8: Avoid picking updatable columns as clustering keys

Just like in relational tables, Cassandra primary keys are not updatable. Under the covers, a Cassandra update is the same as an insert, so writing a row with a changed key value creates a new row instead of modifying the existing one. Design appropriate application logic to implement such updates if necessary.
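The effect can be seen in a toy in-memory model, where a table is a dictionary keyed by primary key and every write is an upsert. This is only an illustration of the behavior described above, not driver code, and the `upsert` and `change_key` helpers are hypothetical.

```python
def upsert(table, primary_key, values):
    """Insert or update: the same write either creates the row or overwrites columns."""
    table.setdefault(primary_key, {}).update(values)


def change_key(table, old_key, new_key):
    """Application-level 'key update': remove the old row, then write it under the new key."""
    values = table.pop(old_key)
    upsert(table, new_key, values)


# Toy table keyed by (order_id, status). 'status' is a poor key column because it changes.
orders = {}
upsert(orders, ("order-1", "OPEN"), {"amount": 100})
upsert(orders, ("order-1", "OPEN"), {"amount": 120})    # same key: row is overwritten
upsert(orders, ("order-1", "CLOSED"), {"amount": 120})  # changed key: a second row appears
rows_after_naive_update = len(orders)

# Handling the change explicitly leaves a single row.
fixed = {}
upsert(fixed, ("order-1", "OPEN"), {"amount": 120})
change_key(fixed, ("order-1", "OPEN"), ("order-1", "CLOSED"))
rows_after_explicit_update = len(fixed)
```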

Principle #9: Leverage predicate pushdown capability on clustering key columns

Keep the most frequently filtered columns at the top of the clustering key list. Sometimes, if your table has non-updatable columns that are used to filter rows most often, you can include them in the clustering key even if they are not required to make rows unique.
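Why the order matters can be sketched with a simplified model of how clustering column filters are restricted: columns are considered in declaration order, equality filters allow moving on to the next column, and a range filter ends the chain. This is my approximation for illustration only. The exact pushdown rules depend on the Cassandra and connector versions, and the helper and column names are hypothetical.

```python
RANGE_OPS = {"<", "<=", ">", ">="}


def pushable_clustering_filters(clustering_columns, filters):
    """Return the clustering columns whose filters can be pushed down (simplified model).

    clustering_columns: column names in declaration order.
    filters: maps a column name to its operator, e.g. {"txn_date": "="}.
    """
    pushed = []
    for column in clustering_columns:
        op = filters.get(column)
        if op == "=":
            pushed.append(column)  # equality: the next column may also be restricted
        elif op in RANGE_OPS:
            pushed.append(column)  # range: allowed, but nothing after it
            break
        else:
            break                  # unfiltered column: the chain stops here
    return pushed


clustering = ["txn_date", "store_id", "txn_id"]

both = pushable_clustering_filters(clustering, {"txn_date": "=", "store_id": ">"})
skipped_first = pushable_clustering_filters(clustering, {"store_id": "="})
range_first = pushable_clustering_filters(clustering, {"txn_date": ">=", "store_id": "="})
```

In this model, a filter on `store_id` alone is not pushed down because `txn_date` comes first, which is why the most frequently filtered column belongs at the top of the list.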